How do we decide if a machine deserves to be called “intelligent”? British computer pioneer Alan Turing proposed an answer more than half a century ago. He suggested a kind of parlour game in which a computer tries to pass itself off as a human being by answering questions (typically through text, as its physical appearance would be a giveaway). Turing called it the “imitation game.” Today, we call it the Turing test. In 1950, Turing speculated that by 2000 “an average interrogator will not have more than a 70 per cent chance of making the right identification” after five minutes of questioning – that is, computer programs would stymie the judges at least 30 per cent of the time. (A couple of years later he made a more conservative prediction, saying that it would be 100 years before a machine passed the test.)

Back in 2012, I was a judge in a “Turing test marathon” held at Bletchley Park, England, the site of Turing’s code-breaking work during the Second World War. The winning program (called a “chatbot”) was able to fool the judges 29 per cent of the time, just a smidgeon below Turing’s 30 per cent threshold. But there’s a catch. The winning bot didn’t pretend to be an adult native English speaker; rather, it emulated “Eugene,” a 13-year-old Ukrainian boy. Not surprisingly, both Eugene and the Turing test in general have met with criticism.

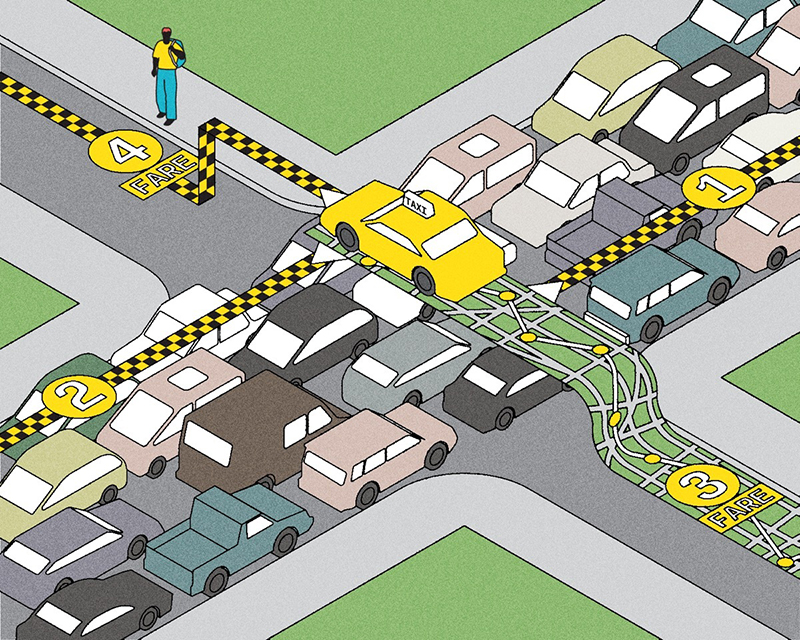

And so Geoffrey Hinton, a U of T computer scientist and world leader in neural network technology, suggests a more sophisticated test: given a photograph, produce a sentence describing what it shows. A child can do it – and now machines are on the verge of doing it too, thanks to Hinton’s work on neural networks. The strength of this “visual Turing test” is that it’s much harder to fake one’s way through it. A chatbot, after all, can alternate between parroting its conversation partner and changing the subject (“That’s cool that you’re from Canada. Do you like hamburgers?”). But you can’t get from pixels to words unless you really see, and understand, what’s in an image. “It’s very hard to say a machine doesn’t understand what’s in the image, if it can tell you what’s in the image,” says Hinton.
