The students come at all hours, slipping into the cluttered computer lab between classes to work on the circuits or tweak the design. By late October the basics of a robot are there: two wheels on a circular frame with a corona of infrared sensors. Sometime early in November – on one of those nights when the clock hands seem to spin too quickly – they look at their trundling, buzzing mass of wires, circuit boards and batteries and christen it The Big Bad Wolf, though this robot clearly can’t blow anything down. And it certainly can’t do what it’s supposed to do, which is navigate around a warren of miniature rooms and hallways to find and extinguish a burning candle. Not tonight. Not by a long shot.

It’s a little more than a week until The Big Bad Wolf must be ready for competition. Robert Nguyen, chief programmer with the U of T Artificial Intelligence and Robotics Club (UTAIR), slouches over a keyboard, punching in lines of code to program the robot’s microcontroller to communicate with a computer mouse. A mouse works by detecting motion across a surface and translating that motion into data a computer can read. Nguyen hopes that this mouse, re-purposed and mounted between the robot’s wheels, will help guide the robot, and the club, to victory.

The club’s mechanical team, Chris Moraes and Zoe Shainfarber, are unscrewing the undercarriage for the fourth time, hoping to find a way to make the robot move in a straight line. The no-budget wheels aren’t helping: Moraes and Shainfarber made them from electrical tape and dowelling. Out in the hallway, Sandra Mau, the club president, sits sprawled on the floor over a large piece of white cardboard. Mau’s task tonight is to translate the event’s guidelines – a set of rules and measurements so exacting they might have been written by a team of corporate lawyers – into a scale replica of the competition course, which is essentially a four-room miniature bungalow, without the roof.

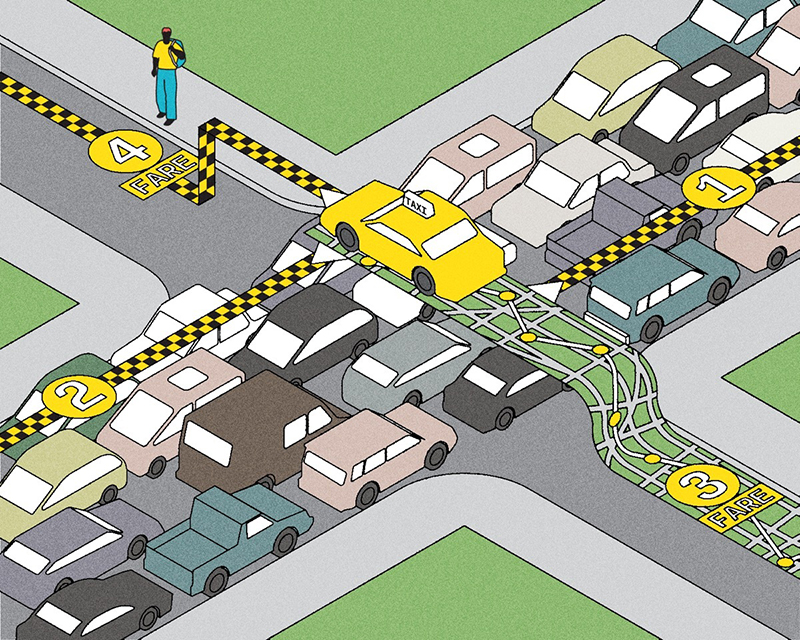

This will be UTAIR’s first year at the Eastern Canadian Robot Games. The annual event, held at the Ontario Science Centre in Toronto, draws students and serious amateurs from across North America. The games range from robot sumo wrestling to “line following” (picture souped-up Tonka Trucks steering their way along impossibly twisty black lines laid out on the floor) to firefighting, the most difficult challenge. Getting around the competition course is hard enough. What’s worse, the robots have to find a burning candle placed in a corner of one of the rooms and put it out – without knocking the candle over. Competitors get extra points if their robot returns to the starting position after completing its mission. And the faster the robot performs, the more points the team scores.

It will take all of the students’ skills – and a good deal of luck – to get the robot to do what it should. Many of the club’s members are deluged with other demands. Nguyen, 22, a student in the biomedical option of engineering science, and Shainfarber, 22, in the aerospace option, are swamped with work at MDA Space Missions, the Canadian aerospace company that in January won a $154-million (US) contract to help NASA fix the Hubble Space Telescope. Both students, who have finished their third year of studies, are completing a 16-month internship at the Toronto-area company as part of their engineering degree. They have been dashing downtown from Brampton on their off-hours to work on the robot. Mau, 23, a fourth-year aerospace student, and Moraes, 21, an engineering student in the fourth year of a nanotechnology option, have both been cramming for fall mid-terms.

Nobody in the club has built a firefighting robot before. And the team hopes to make the robot without spending more than a few hundred dollars. There will be no frills such as sonar navigation systems and laser-cut components, which have become standard on the competition circuit. As the tournament creeps closer they don’t know if the robot’s navigation system will work, or if its sensors can detect a lit candle, or whether they’ll be able to make the machine move in a straight line. They’re a long way from completing a successful test run.

After screwing the undercarriage back on, Shainfarber and Moraes set the robot down on the lab floor, gingerly, as if teaching a baby how to walk. At the flip of a switch the robot spins out in a wide circle. Then it rams into a table leg and stops. Shainfarber takes the collision in stride. Asked whether the robot is going to be done on time, she replies, “That depends on what your definition of ‘done’ is.”

***

In many ways the problems that the students have to solve are the same dilemmas that have inspired and frustrated roboticists for years. Their machine must be able to find its way around. It must be able to make decisions – when to turn, how much to turn and when to switch on its fan – and it must be able to “see,” or at the very least detect a flame.

Canada’s robotics industry is still small compared with robotics in the United States and abroad, but it has had its share of successes. Researchers at Canadian universities have developed robots to inspect coral reefs and to work as tour guides in museums. Engineering Services, a Toronto firm founded by U of T engineering professor Andrew Goldenberg, has developed robots for bomb and hazardous materials disposal, as well as for biotechnology and manufacturing applications. And in the 1970s the most famous of Canada’s robotics companies, Spar Aerospace, developed the Shuttle Remote Manipulator System, the mechanical handling device better known as the Canadarm. (Professor Goldenberg, then a recently graduated engineer at Spar, helped develop it.) In 1981, the device was sent into space aboard the Space Shuttle Columbia, and the Canadarm and its descendants have proven their worth as mobile work platforms for astronauts, for deploying and retrieving satellites, and even for potentially life-and-death shuttle repairs. Spar’s robotics division was sold in 1999 to the company that is now MDA Space Missions, where Nguyen and Shainfarber are interning.

While MDA is primarily concerned with practical applications of robot technology, several U of T professors and their students are investigating robotics at a more theoretical level. Sven Dickinson, vice-chair of the U of T computer science department, has been trying to help machines “see” for the past 20 years. Sight is a key hurdle that researchers must clear before they have any hope of developing thinking, learning, high-functioning robots, like the ones we see in Hollywood movies.

The problem is that computers have trouble identifying anything that doesn’t precisely resemble what they’ve been programmed to see. Scientists can teach computers to recognize particular objects but not categories of objects. They can teach a computer to recognize a telephone, for example, but only a phone of a certain shape and size. (A child’s Mickey Mouse phone would confuse a robot that hadn’t already been taught to recognize it as a phone.) Scientists are approaching this problem of computer vision in two distinct ways. Researchers such as Professor Dickinson are creating mathematical models to express the geometry of certain objects, and then transferring those models to computers equipped with video cameras. A simplified description of a human being might specify a cylindrical torso, two cylindrical legs, two cylindrical arms and a sphere for a head. Each of those parts, in turn, is broken down into sub-parts, with models to explain that a leg is composed of two moving cylinders joined at the knee. With luck, a machine seeing all these parts can determine that it must be looking at a human being. But what if the machine sees a human being from the side or from above? What if its only view is of a head sitting on a set of shoulders?

Another approach to computer vision addresses the problem by storing and matching two-dimensional images of objects, taken from all angles: a circle with a long strip underneath it, for example, could be an aerial view of a person. However, it could also be a mixing bowl on a rectangular cutting board – or a designer lamp, or the logo for the London Underground. “Building systems that can categorize objects the way humans do it, effortlessly, remains one of the great open problems in computer vision,” says Dickinson.

While Professor Dickinson wants to help robots see, Reza Emami, a senior lecturer with engineering science’s Institute for Aerospace Studies, hopes to develop intelligent controllers that allow robots to make decisions by mimicking human behaviour. Emami’s research observes how humans make decisions or perform actions and tries to distil that experience into sets of rules and systems that can help machines follow the same logic. So in the case of a real-life firefighting robot, an ordinary controller would tell a robot to rush in and spray the fire for all it’s worth. An intelligent controller, by contrast, would gauge the fire’s temperature, the wind and the source of the fire, and then sort the data to determine the best way to fight the fire.

Parham Aarabi, an assistant professor in U of T’s electrical and computer engineering department, hopes that robots will one day be able to communicate with spoken language just as easily and accurately as humans do. Aarabi, who holds the Canada Research Chair in Multi-Sensor Information Systems, directs the university’s Artificial Perception Lab. “In a typical environment where there’s going to be noise, there are also going to be obstacles – robots have to be able to find their way around,” he says. “They have to be able to understand what a person tells them, even if there’s music playing in the background.”

Aarabi and his colleagues are developing a speech-enhancement aid that significantly reduces background noise so that robots can distinguish a speaker’s words from the din. Another current project is trying to enable teams of robots to communicate by talking to each other in rudimentary English. And in another effort, Aarabi hopes to outfit search-and-rescue robots with radio equipment that can help them navigate noisy disaster sites. Like fires, for example.

***

The team looks as if it has been through hell. At 9:45 a.m. on Competition Sunday, just a few hours before the games begin, Nguyen, Shainfarber and Roger Mong, 21, a third-year engineering student and the team’s circuits whiz, huddle over their robot in the competition’s crowded preparation area, testing, programming and calibrating as fast as they can. They are punch-drunk, speaking in the clipped, stuttered syllables of the sleep-deprived. Shainfarber and Nguyen have not been home in days. The team has had fires of its own to extinguish.

A few days before the competition, the team discovered that their navigation system, built from the computer mouse, wasn’t going to work. They spent the next 48 hours trying to create another system out of handmade bumper pads. “We’re using touch sensors,” Nguyen explains. “To try to follow the wall.”

“It’s kind of slow,” Shainfarber adds. “You’re hitting the wall a lot. But we couldn’t possibly in a day-and-a-half put together a completely new navigation system.”

Last night the team met what should have been its final challenges, writing new algorithms for the navigation system and calibrating the robot’s infrared sensors so it could distinguish a flame from ambient light. But after spending most of the night accomplishing these tasks, this morning they discover that the competition course is lit with a bank of high-powered spotlights. The lights are so bright that their robot’s sensors can’t distinguish ambient light from flame. It thinks everything is on fire. The Big Bad Wolf’s tiny fan is huffing and puffing, but it’s blowing at nothing but air.

At 11 a.m. two entrants from Grand Rapids, Michigan, announce that they’re pulling out of the competition: like UTAIR’s robot, their robot uses infrared sensors. (The more seasoned competitors generally use ultraviolet sensors, which can be calibrated so as not to be tripped up by ambient light.) The U of T team doesn’t give up. They’ve got three tries. Maybe one will work.

Nguyen kills the infrared sensors and writes some last-minute code for the robot. Competitors can choose to have a white disk placed under the burning candle. The floor of the competition course is black. The team hopes that a sensor will be able to detect the contrast between the black floor and the white disk. If the robot stumbles onto the disk, the contrast might be just enough to trigger the fan.

The first trial begins well enough. The robot wheels out from the starting area and creeps slowly but surely along a wall. When it reaches a corner, though, it turns too far and gets stuck. Nguyen doesn’t hesitate. He picks up the robot and shuts it off before carrying it to the preparation area backstage. He has some more tweaking to do.

An hour later they try again. This time the robot rolls perfectly around the corner, bumping, then correcting its steering, bumping, correcting. It drives into the room with the candle, edging forward until it hits the white disk. The fan switches on. The Big Bad Wolf sweeps right, then left, then directly at the flame. It blows out the fire.

Team U of T does not win the competition – that honour goes to a circuit veteran from the U.S. with not one, but two firefighting robots. But the team does not come last, either. A couple of robots couldn’t find the candle at all.

The U of T students are considering a competition in Hartford, Connecticut, this spring and another in California, but they figure that to stand a chance they’ll need to make some changes to their robot. They’ll probably adopt sonar for their navigation system and buy better sensors to detect the flame. “I think we’re going to have to bite the bullet and actually buy some technology,” says Shainfarber. “If we want to compete there’s no point in trying to reinvent –” She stops herself. “It’s not even reinventing the wheel; it’s like knowing that a [round] wheel exists and choosing to use square wheels instead.”

Still, the team is not discouraged. Far from it. “We showed that we could adapt to having lights that completely screwed up our whole plan – of everything,” says Shainfarber, smiling. “We really came together to get all the parts working.”

Chris Nuttall-Smith is a freelance writer in Toronto. He wrote about the U of T women’s mountain biking team in the Fall 2004 issue.
