Data, data everywhere – but what to do with it all? While today’s technologies are very good at generating data, they’re not so good at analyzing it – especially massive volumes of it. For instance, powerful new telescopes will soon create more data each year than the entire internet. How do we turn all that data into information that can guide discovery in many different fields, from health care to astrophysics?

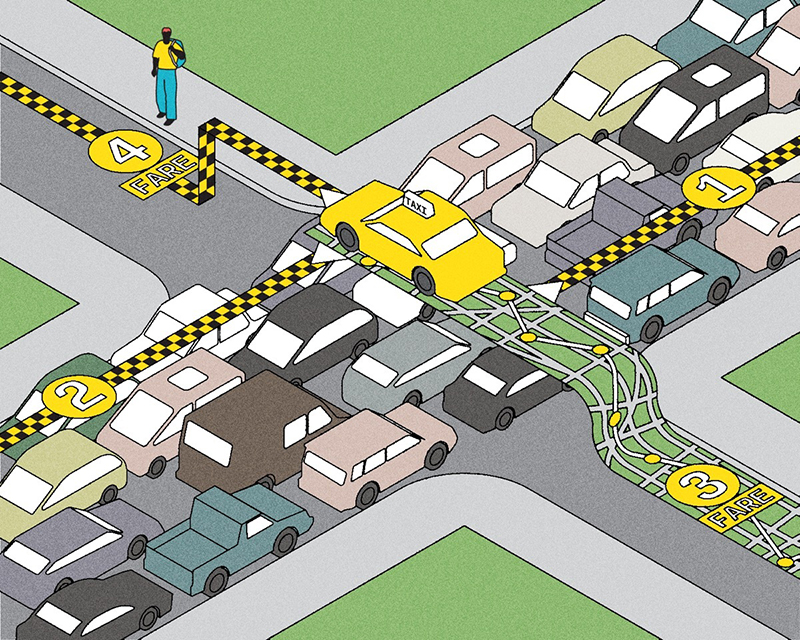

That’s where data science comes in. Data scientists are specialists who identify which real-world questions in a given field need answers, analyze and validate the relevant data from various sources, and draw meaning from it. They do this by developing and using mathematical, statistical and computational tools, including artificial intelligence. Then, acting as bridges, they communicate their findings to experts in the field. For instance, data science could help an oncologist decide which of several cancer therapies would most likely benefit an individual patient, help an urban planner improve traffic flow in a major city, or help an environmental scientist predict the impact of climate change in a particular region.

To this end, U of T launched its new Data Sciences Institute last September with the aim of creating and funding collaborative research teams and connecting them with statisticians and other computational experts. Barely half a year old, the institute already has more than 800 members. They include people with a primary appointment at one of U of T’s three campuses or its hospital partners, as well as students, research associates and trainees who will ultimately form the next generation of data scientists. “The Data Sciences Institute will offer so much opportunity for collaboration and integration, in life sciences, physical sciences, social sciences, humanities and other fields,” says Lisa Strug, the institute’s inaugural director and a professor in the departments of statistical sciences and computer science. “It’s a recipe to make huge advancements, blowing open doors across all kinds of different domains.”

The institute will emphasize two main themes. The first is inequity, which has been an issue in fields such as medical research. For instance, precision medicine uses data generated from genome samples to create tools that can be used to predict risks for specific diseases, discover new drug targets and tailor treatments. Here’s the problem: a large majority of genomes that have been studied come from people of European descent, since the biggest genome-sequencing efforts are based in Europe and the United States. But more than half the residents of Toronto are not of European descent – nor is the vast majority of the world’s population – which means that these powerful new tools may not work as well for them.

Systemic inequities affecting historically oppressed people have fuelled some people’s mistrust of institutions and research, making efforts to diversify this data challenging. Strug, who is also a senior scientist at Toronto’s Hospital for Sick Children, believes one solution is to empower marginalized groups to drive the research process; the institute aims to support research projects with these communities. “To make sure no Canadians are left behind, we must diversify the data we use to develop these health-care tools,” she says.

The institute’s second theme is reproducibility, which is a kind of quality control for research studies. It involves the ability to take a published study and reproduce it using the same computational methods as the original, in order to validate the findings, ensure the research is sound and build upon it. But the original researchers are not always transparent about their approach. “They don’t share the computer code, they don’t share the data they use to build the models, and they don’t share the models themselves,” says Benjamin Haibe-Kains, a professor in the department of medical biophysics and a senior scientist at Toronto’s Princess Margaret Cancer Centre. It’s sometimes possible to reproduce a study without that information, but it’s time-consuming. “What should take you an afternoon becomes a six-month project, and that is a massive waste of resources.”

Sometimes the lack of transparency is due to researchers wanting to protect intellectual property, but often it’s because they simply don’t know how to make their code reproducible. It’s a complex process requiring expertise that many labs don’t have, says Haibe-Kains, who’s co-leading the Data Sciences Institute’s reproducibility theme. To address this, the institute is organizing educational workshops to help labs take the first steps toward making their research transparent and reproducible, which will benefit the entire global scientific community.

Haibe-Kains, who is a cancer researcher, is eager to see how the Data Sciences Institute will link the research world with the clinical world. He envisions a day when a cancer patient and a clinician can confidently go ahead with a specific drug therapy, knowing that a wealth of high-quality data from diverse sources is driving the decision for that particular patient’s age, ethnicity, gender and type of tumour. “Ultimately, when I see that a product of my work will help the patient, that’s going to be the peak of my life,” he says. “I’m super excited to get the data and the predictive models into the hands of the clinicians and ultimately into the hands of the patients, because there’s no reason patients shouldn’t have access to those predictions as well.”
